Posted originally on CTH on February 28, 2026 | Sundance

A remarkable conflict over the use of Artificial Intelligence (AI) software has emerged between the United States Government (USG), specifically the Dept of War (DoW), and the AI software company Anthropic.

At the core of the issue is the USG contract with Anthropic to use its Claude AI system in military operations. The Dept of War has a contract with Anthropic to use its software in combination with various military and weapons systems. However, Anthropic is putting restrictions on the military application of its AI.

Anthropic says its AI cannot be used for defense dept autonomous weapons that operate without human triggering. Additionally, Anthropic says its system cannot be used to surveil U.S. citizens. Under those terms, Anthropic engineers would be the decisionmakers on government use.

The Trump administration has rejected Anthropic’s demand, saying it will not permit a group of Silicon Valley engineers to determine the deployment of U.S. applications, thereby replacing the decision-making of elected officials, military commanders, the Joint Chiefs of Staff, and ultimately the President of the United States, and even military servicemembers who are facing life or death decisions.

It is a key moment for how AI is used in both government and the private sector.

♦ After several weeks of conflict between U.S. government officials and the CEO of Anthropic, Dario Amodei, President Trump finally said enough and told all agencies of government to stop using Anthropic products.

President Trump via Truth Social: “The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!”

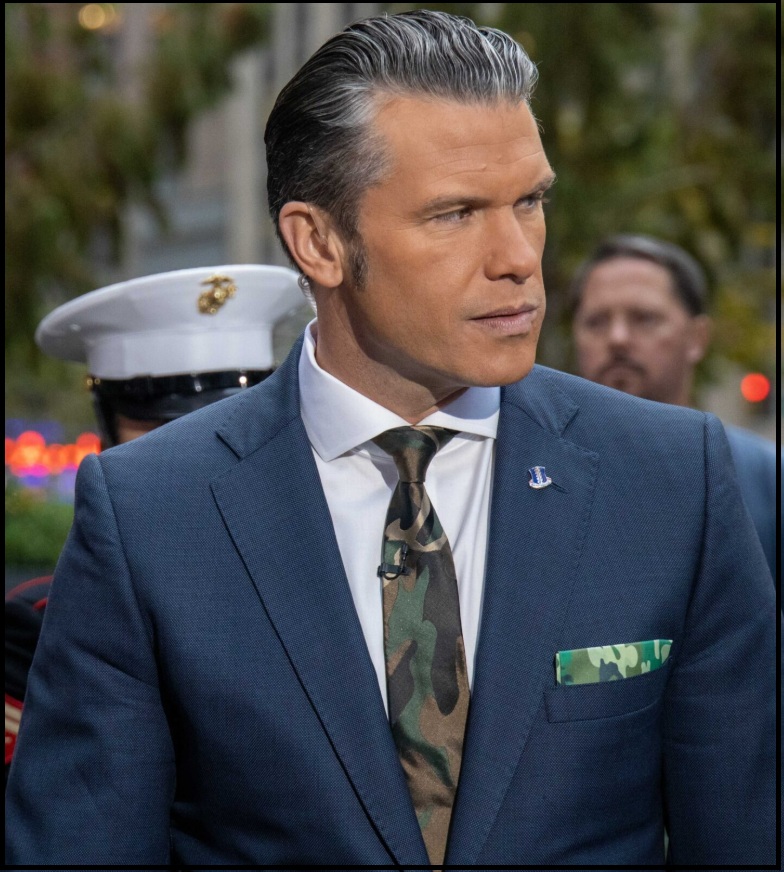

♦ Secretary of War Pete Hegseth then responded via X:

“This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, AnthropicAI and its CEO Dario Amodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission – a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.

In conjunction with the President’s directive for the Federal Government to cease all use of Anthropic’s technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.”

♦ Anthropic then responded:

“Earlier today, Secretary of War Pete Hegseth shared on X that he is directing the Department of War to designate Anthropic a supply chain risk. This action follows months of negotiations that reached an impasse over two exceptions we requested to the lawful use of our AI model, Claude: the mass domestic surveillance of Americans and fully autonomous weapons.

We have not yet received direct communication from the Department of War or the White House on the status of our negotiations.

We have tried in good faith to reach an agreement with the Department of War, making clear that we support all lawful uses of AI for national security aside from the two narrow exceptions above. To the best of our knowledge, these exceptions have not affected a single government mission to date.

We held to our exceptions for two reasons. First, we do not believe that today’s frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America’s warfighters and civilians. Second, we believe that mass domestic surveillance of Americans constitutes a violation of fundamental rights.

Designating Anthropic as a supply chain risk would be an unprecedented action—one historically reserved for US adversaries, never before publicly applied to an American company. We are deeply saddened by these developments. As the first frontier AI company to deploy models in the US government’s classified networks, Anthropic has supported American warfighters since June 2024 and has every intention of continuing to do so.

We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government.

No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court.” (source)

Multiple alternative companies are rapidly developing AI models that could replace the Claude system within the Dept of War. In fact, the DoW is likely to partner with OpenAI as a replacement for the Anthropic contract.

However, this conflict about use has not only erupted within Anthropic but has also surfaced within Palantir. Palantir CEO Alex Karp highlighted the issue in his own discussions about the Pentagon vs. Anthropic standoff.

“The core issue is who decides,” Karp says. The issue is not whether the government’s use of AI is right; the issue is not whether you agree with the mission of the Dept of War. The real issue is who will decide its use.

Karp notes, “It’s commonly known that our software is used in operational context at war.” “Do you really think the warfighter is going to trust a software company that pulls the plug because something becomes controversial?” Let that sit for a second. “Currently, when you’re a warfighter, your life depends on your software.” A group of tech engineers in Silicon Valley does not get to replace the decisions of elected officials, national security experts and the commander in chief.