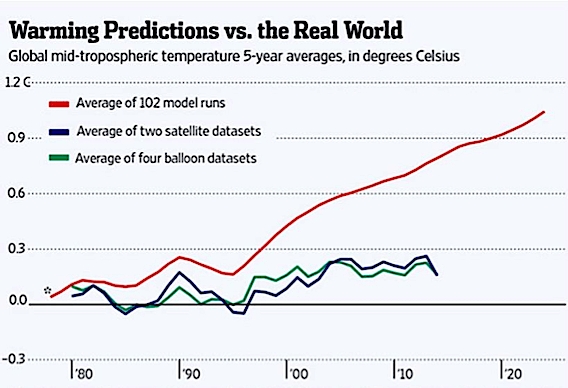

NOAA and NASA manipulate data to support the political agenda behind the myth of manmade climate change, otherwise known as anthropogenic climate change. To keep their jobs, the employees in the various agencies of the federal government publish data, tables and charts that purport to show that we are in the hottest year ever and that if we don’t tax carbon immediately we are all going to die. The national media dutifully promotes this cause, and those who believe in the cause of more taxes attack anyone who disputes the narrative, calling them names such as Flat Earther or Non Believer, and other nastier names as suits them at the time. However, there is a growing problem: the citizens of the country don’t see what the propaganda claims to be happening, and as the disparity gets larger every year the government and its minions, the national media, get more and more desperate.

Over the past several years I have been downloading a table of global temperatures that NASA publishes each month to use in my research. This table is identified as the Land Ocean Temperature Index (LOTI), and each value in it is generated by a very complex computer program. To calculate this table NASA first set an arbitrary base global temperature of 14.0 degrees Celsius (57.2 degrees Fahrenheit), calculated from the period from 1951 to 1980. Then they determine an anomaly by taking the temperature that they calculated, subtracting 14.0 from it, and multiplying the result by 100. This then gives a plus or minus value from a base of 0.0, which represents 14.0 degrees Celsius (C). This creates a whole number, which is apparently easier for the scientists to work with. For example, 14.5 would be an anomaly of 50 (0.5 * 100). The only problem with this method is that the base is strictly arbitrary and can be any number one wants to use; but that doesn’t matter for what we’re going to talk about here.
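The arithmetic described above can be sketched in a few lines of Python. This is only an illustration of the conversion as stated in this post, not NASA's actual program; the function names `loti_anomaly` and `anomaly_to_temp` are made up for this example, and the 14.0 base is the figure quoted above.

```python
# Base temperature as quoted in the text (degrees Celsius).
BASE_TEMP_C = 14.0


def loti_anomaly(temp_c, base_c=BASE_TEMP_C):
    """Convert a temperature in deg C to a whole-number anomaly
    in hundredths of a degree relative to the base."""
    return round((temp_c - base_c) * 100)


def anomaly_to_temp(anomaly, base_c=BASE_TEMP_C):
    """Convert an anomaly in hundredths of a degree back to deg C."""
    return base_c + anomaly / 100


# The example from the text: 14.5 C is an anomaly of 50.
print(loti_anomaly(14.5))
print(anomaly_to_temp(50))
```

Changing `base_c` shifts every anomaly by the same amount, which is why the choice of base does not affect the comparisons that follow.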

As the federal agencies try to support what the Obama administration wants to promote, they have had to resort to data manipulation that has become so blatant that even non-technical people are able to see that something is very wrong. One of the tricks that these agencies use is to change history. They do this in how they calculate their data and in the form of what they show. For example, we have the following Chart of anomalies, with three different plots taken from the LOTI monthly tables for the indicated years. The first is from December 1998 (blue line), the second from October 2009 (green line) and the third from December 2014 (red line), which was the latest one available at the time this paper was written.

This plot was generated by breaking each indicated table into ten-year blocks of values and then creating an average for each block. By doing this we take out large changes in the monthly numbers. For example, for the month of January 1980 the value in the LOTI issued in December 1998 was 30, while the LOTI value for the same month, January 1980, was 24 in the December 2014 report. Which one was right? To my way of thinking some variances could be expected, but they would cancel out when looking at blocks of numbers; this is not the case here. There is one other issue of note: when I first looked at this subject, around 2005, the LOTI table went back to January 1980. Unfortunately, I did not understand the methodology used by NASA at the time, and the earlier tables were not saved. Each month, as new data was published, the current value was added to the table that had been developed. It never occurred to me that the published data was itself a variable. Why would numbers be changed constantly? If they are, of what value are they? Now that we understand how the numbers were derived, let’s analyze the Chart.
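The block averaging described above can be sketched roughly as follows. This is a minimal illustration, assuming each LOTI table has been loaded into a dictionary keyed by (year, month); the function names `decade_averages` and `compare_reports` are hypothetical. The two January 1980 values are the ones quoted above, and the February values are made-up placeholders just to have something to average.

```python
from collections import defaultdict


def decade_averages(monthly):
    """Average monthly anomalies into ten-year blocks.

    monthly maps (year, month) -> anomaly in hundredths of a degree C.
    Blocks start at years divisible by ten (1980-1989, 1990-1999, ...).
    """
    blocks = defaultdict(list)
    for (year, _month), value in monthly.items():
        blocks[year - year % 10].append(value)
    return {start: sum(vals) / len(vals) for start, vals in sorted(blocks.items())}


def compare_reports(report_a, report_b):
    """Difference in block averages (b minus a) for blocks present in both."""
    avg_a = decade_averages(report_a)
    avg_b = decade_averages(report_b)
    return {d: avg_b[d] - avg_a[d] for d in avg_a if d in avg_b}


# January 1980 values as quoted in the text; February values are placeholders.
report_1998 = {(1980, 1): 30, (1980, 2): 40}
report_2014 = {(1980, 1): 24, (1980, 2): 38}

print(decade_averages(report_1998))
print(compare_reports(report_1998, report_2014))
```

If the revisions between reports were just random noise, the differences returned by `compare_reports` should hover around zero for every block.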

At this scale all three plots should be one on top of the other, which they are not. Next, it’s curious that NASA stopped publishing the anomalies prior to 1980. We can also see that the plots prior to 1950 have major swings in them, and that the plots after 1980 do as well. Lastly, why would the base temperature be calculated using the temperatures from 1951 to 1980, since there is a clear and large upward trend in the data?

The first things we’ll look at are the Blue and Green plots, which follow each other reasonably well, the only difference being the dropping of the numbers prior to 1980. What comes to mind is that those promoting anthropogenic climate change did not want to show a decline in temperatures while carbon emissions were growing from 1860 through 1890. It would be interesting to see when this change was made; it wouldn’t be a surprise if it was in the period when James E. Hansen was put in charge of the Goddard Institute for Space Studies (GISS) section of NASA.

Next, what happened before 2014 that made such a large change in the red plot? Could it be that, looking at the plot for the period from 1910 to 2000, there would then be close to a full degree of upward movement in temperature? Actually, that change occurred sometime between October 2011 and September 2012, but the LOTI tables for that period have not been found yet, so it is somewhere in those eleven months. If we go back to the 1860 values, that almost one-degree increase drops by 1/3. Is this intentional?

Lastly, we look at the period from 2000 to the present. Interestingly, it shows that the plot for October 2009 is higher than the plot for December 2014. The average for the period from January 2000 to December 2009 was 62 on the October 2010 report. The same period was 55 on the December 2014 report, which is enough to make 2014 hotter. Was this done so the Obama administration could say that 2014 was the hottest year ever?

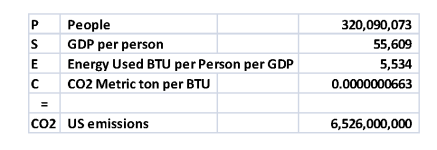

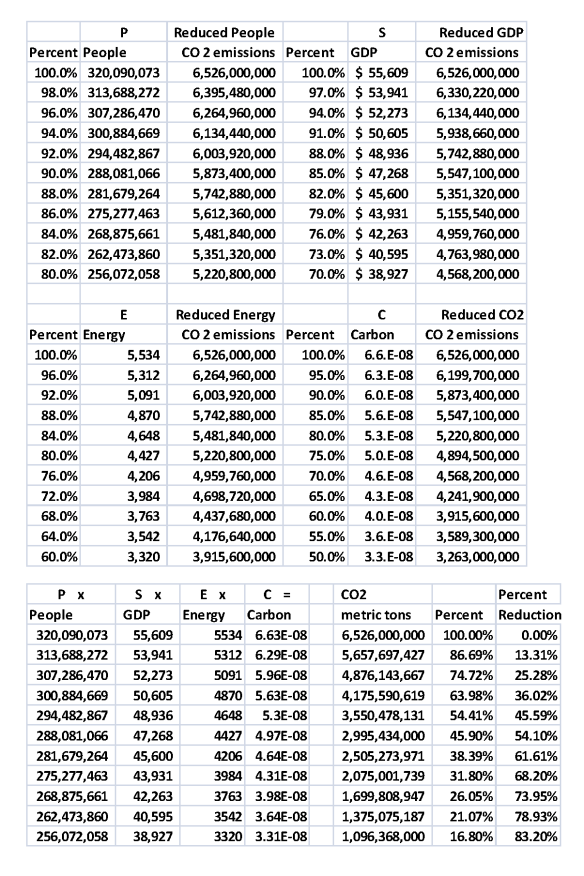

For reference, the following table is what was used to make the plot.