Armstrong Economics Blog/Agriculture Re-Posted Jan 27, 2023 by Martin Armstrong

Food shortages have historically contributed to revolutions more than international war has. Poor grain harvests led to riots as far back as 1529 in the French city of Lyon. During that uprising, known as the Grande Rebeyne (Great Rebellion) and sparked by the high price of wheat, thousands looted and destroyed the houses of rich citizens, eventually spilling the grain from the municipal granary onto the streets. Back then, it was to go get the rich.

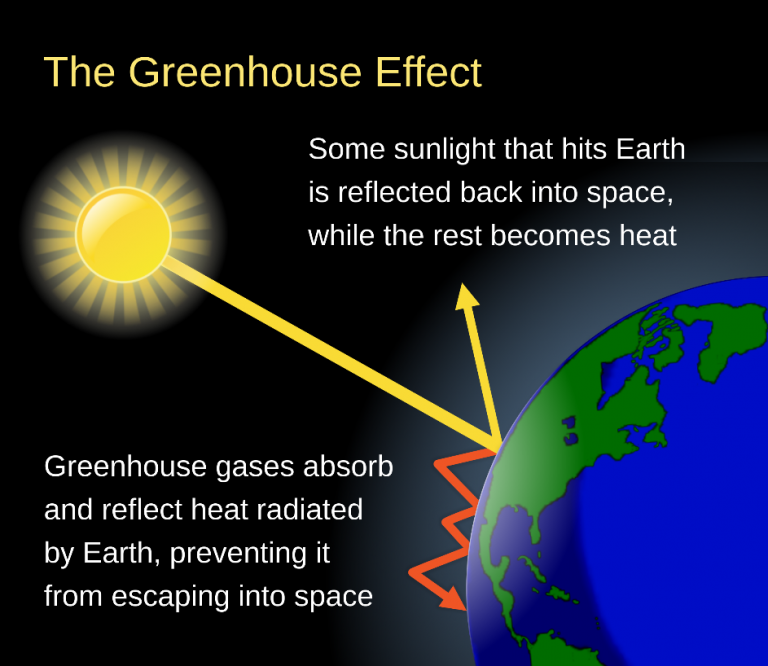

There was a climate change cycle at work, and today’s climate zealots ignore this history altogether, for it did not involve fossil fuels. The climate got worse at the bottom of the Mini Ice Age, which was about 1650. It did not warm up substantially until the mid-1800s. During the 18th century, the climate resulted in very poor crops. Since the 1760s, the king had been counseled by the Physiocrats, a group of economists who believed that the wealth of nations was derived solely from the value of land and that agricultural products should therefore be highly priced. This is why Adam Smith wrote his Wealth of Nations as a retort to the Physiocrats. It was their theory that justified imperialism, the quest to conquer more land for wealth in the days of empire-building.

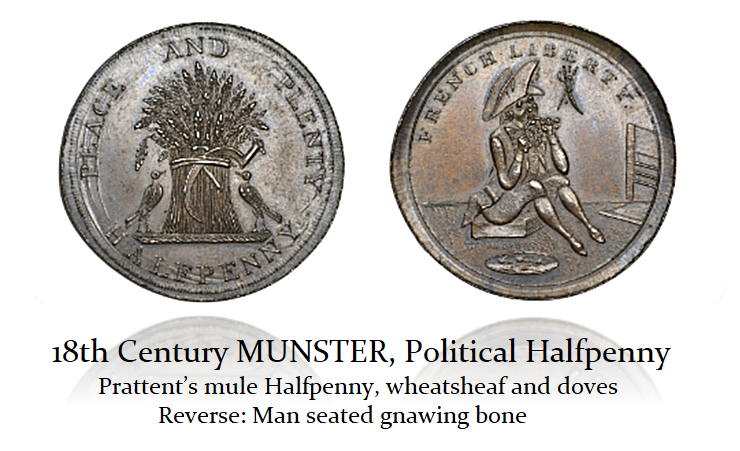

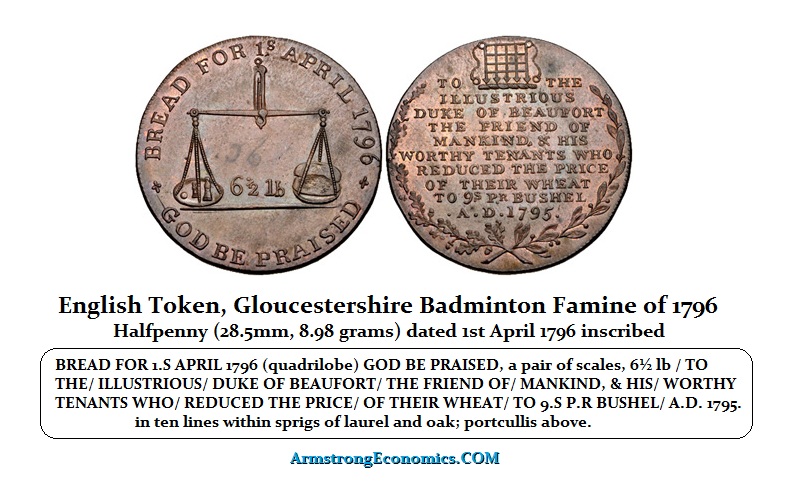

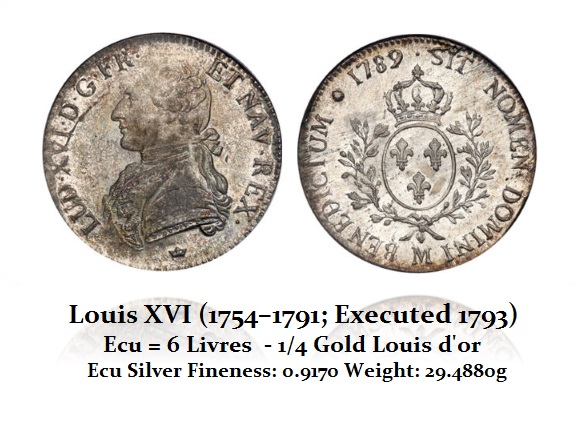

The King of France had listened to the Physiocrats, who counseled him to intermittently deregulate the domestic grain trade and introduce a form of free trade. That did not go very well, for there was a shortage of grain and deregulation only led to a bidding war, hence the high price of wheat. We even see English political tokens of the era campaigning about the high price of grain and the shortage of food, with one showing a man gnawing on a bone.

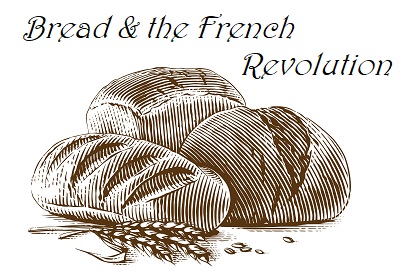

Voltaire once remarked that Parisians required only “the comic opera and white bread.” Indeed, bread has played a critical and often overlooked role in French history. The crowd that stormed the Bastille on July 14th, 1789, at the start of the French Revolution, was looking not just for guns but also for grain to make bread.

The price of bread and the shortages played a very significant role during the revolution. We must understand that Marie Antoinette’s supposed quote upon hearing that her subjects had no bread, “Let them eat cake!”, was just propaganda at the time. The “cake” was not the cake as we know it today, but the crust still left in the pan after taking the bread out. This shows the magnitude of the role that the shortage of bread played in the revolution.

In late April and May of 1775, the food shortages and high prices of grain ignited an explosion of popular anger in the regions surrounding Paris. There were more than 300 riots and raids for grain over just three weeks (3.14 weeks). Historians dubbed this the Flour War. The people even stormed the palace at Versailles before the riots spread into Paris and outward into the countryside.

The food shortage became acute during the 1780s and was exacerbated by the influx of immigration to France during that period. It was a period of changing social values where we heard similar cries for equality. Eventually, this became one of the virtues on which the French Republic was founded. Most importantly, the French Constitution of 1791 explicitly stipulated a right to freedom of movement. The crisis was mostly perceived to be a food shortage, and the reason given was the greedy rich. Immigration thus contributed in part to a huge rise in population: France had around 5-6 million more people in 1789 than in 1720.

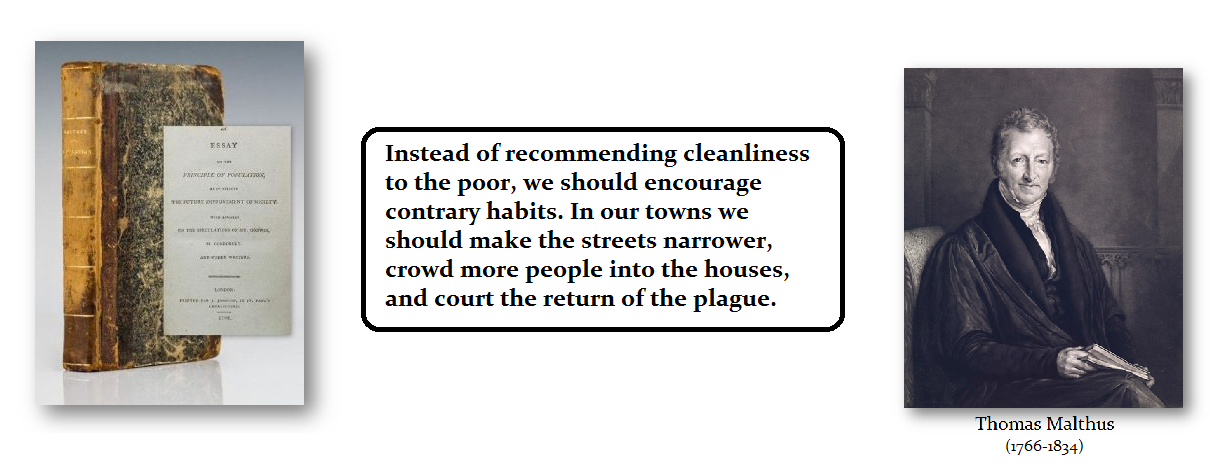

Against this backdrop, Thomas Malthus (1766-1834) wrote An Essay on the Principle of Population, first published anonymously in 1798. He theorized that the population would outgrow the ability to produce food. We can see how his thinking formed because of the Mini Ice Age that bottomed in 1650. All of this was because of climate change, which instigated food shortages. Therefore, it was commonly accepted that without a corresponding increase in native grain production, there would be a serious crisis.

The refusal on the part of most of the French to eat anything but a cereal-based diet was another major issue. Bread likely accounted for 60-80 percent of the budget of a wage-earner’s family at that time. Consequently, even a small rise in grain prices could spark political tensions. Because this was such an issue, and probably the major cause of the French Revolution for the majority, Finance Minister Jacques Necker (1732–1804) claimed that, to show solidarity with the people, King Louis XVI was eating the lower-class maslin bread. Maslin bread is made from a mix of wheat and rye, unlike the elite manchet, a white bread made by sifting wholemeal flour to remove the wheatgerm and bran.

That solidarity was seen as propaganda, and the instigators made up the Marie Antoinette quote: “Let them eat cake.” Then there was a plot drawn up at Passy in 1789 that fomented the rebellion against the crown shortly before the people stormed the Bastille. It declared “do everything in our power to ensure that the lack of bread is total, so that the bourgeoisie are forced to take up arms.”

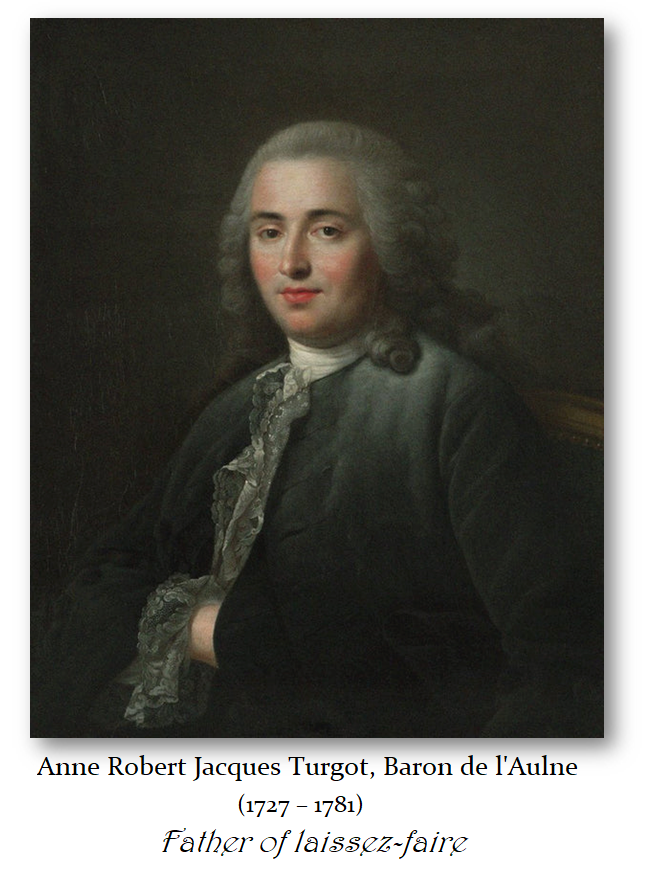

Also active at this time was Anne Robert Jacques Turgot (1727-1781), Baron de l’Aulne, a French economist and statesman. He was originally considered a Physiocrat, but he kept an open mind and became the first economist to recognize the law of diminishing marginal returns in agriculture. He became the father of economic liberalism, which we today call laissez-faire, for he put it into action. He saw that the overregulation of grain production was also contributing to the food shortages. He once said: “Ne vous mêlez pas du pain” (Do not meddle with bread).

The French Revolution overthrew the monarchy, and the revolutionaries began beheading anyone who supported the monarchy and confiscated their wealth as well as the land belonging to the Catholic Church. Nevertheless, the revolution did not end French anxiety over bread. On August 29th, 1789, only two days after completing the Declaration of the Rights of Man and of the Citizen, the Constituent Assembly completely deregulated domestic grain markets. The move raised fears about speculation, hoarding, and exportation.

Then on October 21st, 1789, a baker, Denis François, was accused of hiding loaves from sale as part of a conspiracy to deprive the people of bread. Despite a hearing which proved him innocent, the crowd dragged François to the Place de Grève, hanged and decapitated him, and made his pregnant wife kiss his bloodied lips. Immediately thereafter, the National Constituent Assembly instituted martial law. At first sight, this act appears as a callous lynching by the mob, yet it led to social sanctions against the general public. The deputies decided to meet popular violence with force.

So, food has often been a MAJOR factor in revolutions. We are entering a cold period. Ukraine has been the breadbasket for Europe. Escalating this war will also lead to accelerating the food shortages post-2024. It is interesting how we learn nothing from history. Wars are instigated by political leaders while revolutions are instigated by the people.